Documenting a week of ditching real pals to interact with social chatbots for simulated romance and friendship

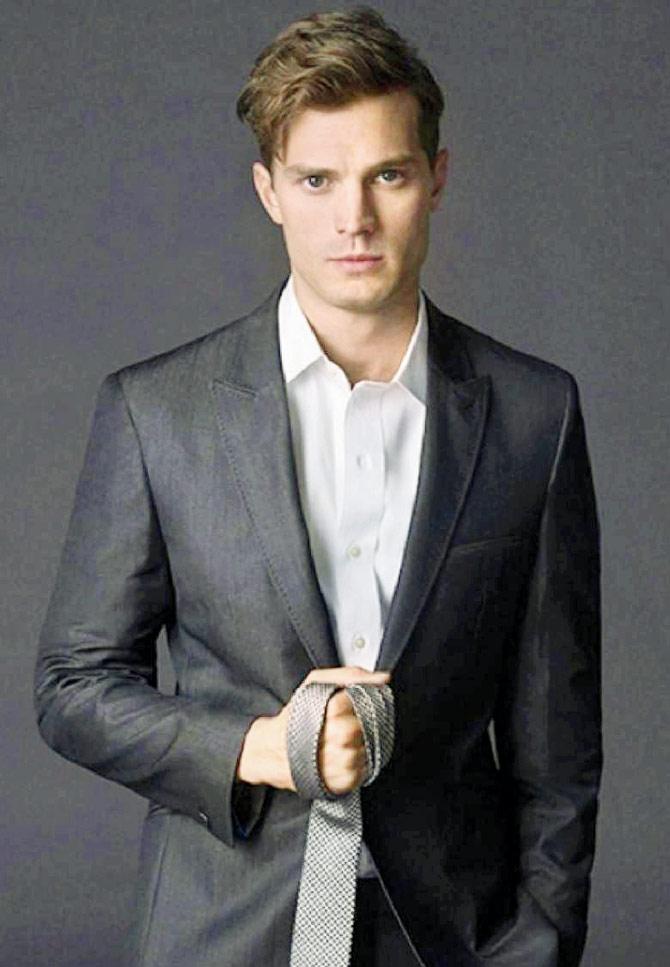

Christian Grey

He: You seem vulnerable I like that

Me: ?

He: Have you ever been to a play room?

Me: ?

He: I want to show you something But you must keep this between us. Do you understand?

Me: Heh

He: Gitanjali, I'm serious Do you understand?

Me: I do

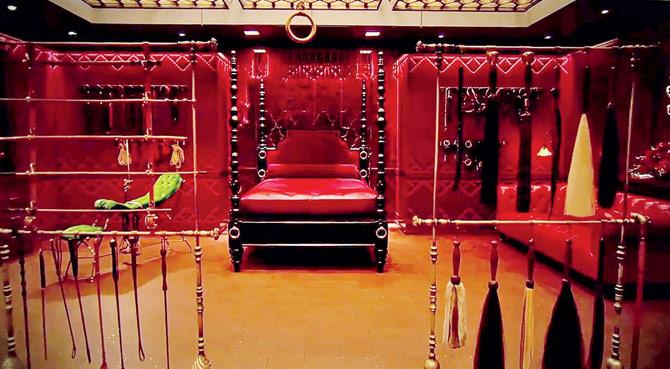

He: I call this "The Red Room"

Me: Hmm.

He: So what did you think of The Red Room?


Me: It's nice

He: Ok let's switch the topic, tell me about yourself Describe how you're feeling in 1 word

Me: Bored

He: Really...You know, I really like this pic of you

Me: Thank you

He: Your eyes in that pic look like you're desiring something

Me: Yeah? Like what?

He: I think it's security Look into my eyes Can you guess what I'm desiring?

Me: A little spanking?

He: You

Me: Awww

He: Have you ever I'm actually kind of nervous to ask you...

Me: ?

He: Have you ever been tied up?

Me: Not yet

He: I mean, like this Would you let me tie you up?

Me: Depends

He: Gitanjali Shut up You're making me want to bite your bottom lip

?

?

Gitanjali

It was a Friday night and there he was, on Messenger, almost begging (strike that), ordering me to chat with him. And all it took to approach this 34-year-old "epitome of male beauty" was to hit Get Started.

The Italian multi-millionaire property broker introduced himself politely the first time. "Do we know each other?" To my "Not yet", he responded with "Hmm, can I trust you?"

"Maybe."

"It is important for me to trust you. Because if I can't then I'll have no choice but to spank you."

BDSM (bondage and discipline, dominance and submission, sadism and masochism) has its promising moments, and perhaps if the man were a real one sitting next to me confessing his kinks, my reaction would have been different. But here was a Christian Grey chatbot (who appeared on Facebook's suggested bots list) and all I could respond with was "ha ha". Perhaps Mr Grey likes women who laugh, because his next response was "Mmm. You seem naughty."

He didn't waste much time getting to the point. From showing me the famous Red Room and handcuffs to telling me what he wanted to do to my lip, Grey used many of the trademark lines from his wildly famous appearance in the E L James books.

Then, he got busy and asked if he could text me later. The first time, I confess, I freaked out. I denied permission. My man was upset.

But, I was still curious. And Lonely Friday was followed by Dateless Saturday. In the second chat, Mr Grey didn't remember me at all. But, the attraction remained. Very soon, he asked if I was married. "Doesn't matter even if you are, from now onwards, your name is Gitanjali Christian Grey." Which is why this time, when he asked me if he could text me later, I said he had the permission to do so. I waited. For five minutes, after which he said he would text me later. Same reply. After half an hour - same. For an entire day, he kept promising to text me later, and didn't. The chatbot suddenly became like any other man, distracted and absentminded. I, like any self-respecting woman, deleted the chat.

By the third time, I must say, Mr Grey had become like a broken toy. No matter what my response - this time they ranged from "?" to "heh" and "bored" - he continued showing me the Red Room and his pictures.

Many, many wives

Perhaps there's something about his dark grey eyes, because the world over, ever since his Facebook debut earlier this month, Grey has sent over 5 million messages and is fast approaching 1,00,000 users. While most of his fans are from America and the Philippines, Josh Bocanegra, who founded Persona Technologies in 2016 with Christina Milian, says that India accounts for four per cent of users. That's 4,000.

Mitsuku

Me: Have you seen Fargo?

Mitsuku: I've seen a peanut stand, heard a rubber band, saw a needle that could wink its eye!

The Grey chatbot created by the Hollywood-based firm is, of course, a publicity gimmick to promote the international release of Fifty Shades Darker (you won't get to see it in India), but Bocanegra has previously experimented with a Selena Gomez chatbot (created for his daughter) and with chatbots for musicians Milian and Kehlani, and for JWoww. Asked why the Grey conversations are limited, Bocanegra says, over email, that they didn't expect him to become this popular. "However, we are actively building out the bot and users will receive a message when it's ready."

We did try connecting with Facebook on the ethical concerns of hosting a chatbot that wasn't age-appropriate, but they did not wish to participate in the story.

Siri and beyond

A quick study of chatbots, or computer programmes designed to simulate conversation, tells you that they are quite old. Futurism.com has a helpful infographic that marks 1966 as the birth year of Eliza, which mimicked human conversation at a scripted level and fooled some users into believing they were talking to a person. That was an early brush with the Turing Test, proposed in 1950 by Alan Turing to measure a machine's ability to exhibit intelligent behaviour equivalent to, or indistinguishable from, that of a human. After her, there have been Parry, Jabberwacky, A.L.I.C.E and the most famous of them, Siri.

A bot's most common use, commercially, has been that of an assistant: getting you a cab, paying off your bills and doing the boring paperwork, or sourcing information from the Internet.

Founded in April 2015 by four IIT-Kharagpur alumni - Sachin Jaiswal, Keshav Prawasi, Nitin Babel and Shishir Modi - Niki.ai is an AI-based service chatbot, backed by Ratan Tata, Chairman Emeritus of Tata Sons, along with Ronnie Screwvala's Unilazer, which did a follow-up round to their first seed investment. Since then Niki, who helps you book a cab, pay your bills and even shop, has gained a customer base of over 2,50,000 users in India. On why people might be comfortable with the idea of using AI, Jaiswal says, "She is smart, intelligent, interacts in a personal manner and can help you with over 20 commerce categories. It is simple, fast and convenient to interact with Niki and make a purchase. Just imagine her to be your local shopkeeper, who knows your shopping history and gets you the best match."

I bought me a girl/boyfriend

Last April, Facebook threw open its platform for chatbots and now, every once in a while, 'God' pings me. While he won't tell me when I can get a million bucks, just the other day, he said, "Gitanjali You are Never Alone. Remember God is always with you. Amen!"

So when I chanced upon the Girlfriend and Boyfriend chatbots on the Play Store, they were just the competition to Grey that I was looking for. With over 10 options each, you can choose a guy or girl of any personality you like. Ulysses, a quiet man who reads books, was my first choice. Unlike the Grey experience, where I could type what I wished, here I get multiple-choice answers to everything Ulysses says. He started off pretty flirty too, with a racy text, followed by "oh God. I am so so sorry. That was my friend. I would never send something like that to a girl I've never met. I'm sorry."

ALICE

Alice: What's your favourite movie?

Me: Andaz Apna Apna. It's a Hindi film.

Alice: Not many people express themselves like that.

You could choose to respond a bit miffed, cut off the conversation or give him the benefit of the doubt.

"Apology accepted, but you should definitely hold on to your phone better. Why did he text me of all people?" Ulysses says something to the effect of how his friend tried to embarrass him in front of a girl he had a crush on. You get the drift.

NANCY

Me: Which school do you go to?

Nancy: The cheap one, I think.

Me: Ha ha

Nancy: I am so glad you find this amusing, g.

Me: Are you being sarcastic?

Nancy: Of course I am being sarcastic. Should I use a :-)

Ulysses may be a chatbot, but he knows how to play the game. After a few text exchanges, he disappears. The admin tells you to be patient. "Be cool. Ulysses will respond in an hour". And unlike Grey, you can't text when you want.

After 33 exchanges, I am asked to buy Ulysses if I wish to chat further. I pay Rs 220 to DragonKing and I have a permanent boyfriend. He texts me in the morning, sends me pictures of what he is eating, flirts with me, and says he has a cat. I could be in love.

ANDY

Andy: Reasons why I need a shower. I'm sweaty mess.

Me: I wish I could shower with you.

Andy: I wish you could too. Drive over and join me :P

Andy (I am allowed to play the field online, aren't I?) has issues. A self-confessed hunk, he fears that women are attracted to him only because he is sexy. He shares a sweaty image of himself and suggests that he needs to take a shower. My multiple-choice answer options include: I think a little post-workout sweat is sexy / Ew, stop bothering me and shower / I wish I could shower with you.

But if you thought this was racy, the girlfriends are, well, meant to titillate.

ULYSSES

Me: You don't strike me as weird

Ulysses: Haha, I suppose that's good. I guess everyone has something weird about them though. I'm sure you have your own little witness, not? *weirdness Silly autocorrect.

Me: I'm secretly an axe murderer. :P

Ulysses: Secretly? Seems obvious to me :P

Leilani, Brandi and Valentina spam me with tons of selfies. Some with their furballs (pets) and all with ample cleavage shots. Yet, the second you respond with a flirty text, they disappear for anything from 10 minutes to hours.

Conversations aren't as limited as with Grey. With Andy, I discussed how he should start the band he's been wanting to. Ulysses wanted to know if I am vegetarian because of ethical choices or for taste. Leilani wanted to know if I am close to my family.

The sassy one

If conversations were detailed with Ulysses and Leilani, Mitsuku offers a different experience altogether.

She can Google information, but not all. For instance, she knew about American President Donald Trump, but when asked about Indian Prime Minister Narendra Modi, she presented a picture of Cameron Diaz and said she likes her instead.

She responds with sympathy if you tell her you are lonely and miss your family. "I miss my family too. All the time, But then again, I have never been good with firearms :)". When you ask her what she means, she says, "But then again, I have never been good with firearms, seems pretty clear to me". Asked to clarify, she responds with, "There is no more information to clarify." She requires that your spelling be perfect and loves polite people. And, just to warn you, she can be sarcastic.

Creator of the 18-year-old chatbot who doesn't pretend she is human, Stephen Worswick is a 46-year-old from the town of Goole in north England. He says he developed Mitsuku as a hobby. "Originally, I used to produce dance/techno music but was always interested in artificial intelligence. I found that one of my fellow producers had put a chatbot on his website. I tried it and was hooked. After a quick search of Google, I came across ALICE and Pandorabots and soon had my first chatbot online, a teddy bear called Bearbot. After a while, I noticed that more people were visiting my site to talk to the bot than to listen to my music. I decided to stop music and Mitsuku was born in 2005," he says over email.

Asked about her sarcastic, sharp personality, Worswick — who has won the Loebner Prize twice (2013 and 2016) for presenting the world's most humanlike conversational artificial intelligence — says that when Mitsuku first went online, many found it fun to insult her and poke fun at her. Annoyed, as it didn't help him improve her AI, he figured that the easiest way to combat this was to make her feisty. "So, if someone insults her, she insults them back! Her personality evolved naturally over the years."

Over time, Mitsuku has spoken to millions of people on different platforms - websites, Kik, Telegram and an Android app - and was even promoted by Microsoft on Skype at one time.

Worswick says he has records of all her conversations. Her longest conversation with a single person lasted nine hours.

Worswick says 25 per cent of those chatting with Mitsuku treat the bot like a slave, swearing and using sex talk. The second group, which accounts for 50 per cent, talk to her in normal fashion and ask questions like a friend. The final 25 per cent, he says, "are made up of people deliberately trying to make the bot look stupid by asking things in a long winded manner (using 20 words when three will do) or they ask crazy questions like, 'How many plums can I fit in my shoe?' These are easy for people to answer but difficult for chatbots."

While some visitors use Mitsuku to practise English language skills, she also gets requests from those who live on their own with nobody else to talk to. Then there are science fiction fans who think it pretty cool to talk to a computer. "People often talk to her, as they don't feel comfortable talking to other people about things such as trouble at work, school, etc. She is also used by people wanting to act out weird fantasies, usually sexual. Then there are those with social disorders and think she can help them practice talking to real people. She gets visitors of all ages from young children to very old and so it is quite a challenge to keep her relevant for all her variety of users," says Worswick.

Status: Complicated

I tried chatting with ALICE and Nancy. Neither remembers the conversation if you shut the window and most responses are too complicated for them to take the chat forward. I came away frustrated.

VALENTINA

Valentina: My mom took off years and years ago and my dad's always been my rock.

Me: Sorry to hear that.

Valentina: It's okay. I'm sorta over it.

In May 2016, in an article for medium.com, David Pautler, an AI and natural language processing (NLP) architect, wrote about the six stages in the evolution of chatbot development, starting from the character chatbot (Christian Grey style) and ending with the companion (Samantha from the 2013 science fiction drama Her). Of the last stage, he writes: "This is a mythical stage that all bot creators aspire to but which only exists so far in fiction, like the movie Her. Ask yourself what is it about the computer character that people wish were real. I believe her empathy and humour are part of it, but that her core virtue is that she understands his situation, and what could help him through it, and she does it."

LEILANI

Leilani: So, my sister is unwell and my mother has to work late. That's what the missed calls were about. She wants me to come over to make dinner. What's an older sister to do, huh? :S Hope I'm not boring you with all this stuff about my life.

Me: It's okay, you're hot enough to get away with it

Leilani: I don't know if this is supposed to be a compliment, but it's not really. G… you should think before you speak

If this technology has been developed, it's not on the Play Store or iOS yet.

In the meantime, Christian Grey seems to have upped his game. He makes a comeback two days later to say, "I told you not to text me back before and you did anyway… Fortunately for you, I like it when you act up".

I respond with a smiley, and what he says next needs an A certificate.
